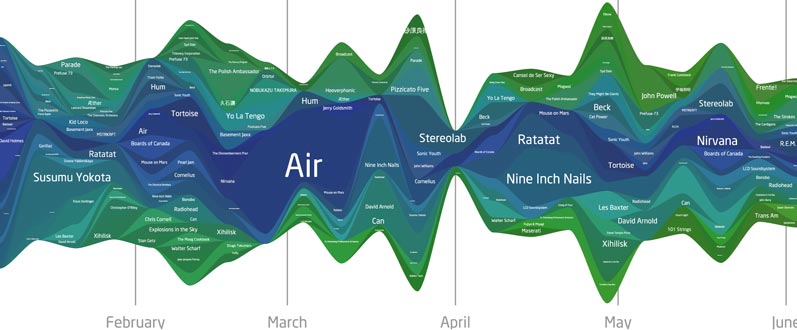

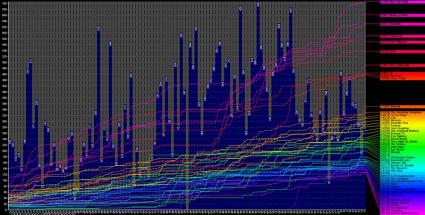

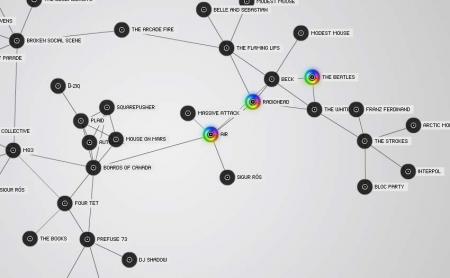

When it comes to my iTunes library, I’m a regular statistics nut. Sure, my library exists primarily for my own enjoyment, but it contains so much organically-compiled data about my habits and tastes that I can’t help but want to take a look at it and find out what the data says about my interests.

But for a while now, I’ve struggled to quantify, tabulate and analyze the overall sense of my library. Which of my albums are truly the greatest? Which artists, when all their songs are taken together, are really my favorites? And by how much? I want numbers.

None of the iTunes stats options available at the moment give me the type of results that I want. The Album Ranking AppleScript provides a simple average that skews toward albums with fewer tracks. SuperAnalyzer provides a top 10 list that is skewed toward albums with more tracks.

Most iTunes stats tools simply provide averages or totals of play counts and/or star ratings. Averages, while somewhat useful, can be misleading. An album could have a handful of awesome songs and a bunch of filler and still rank as well as an album that’s consistently good but without much breakout material.

And that can be frustrating to me, because, in terms of album or artist worth, I tend to value the ones with consistent performance.

Take, for example, my recent run-down of Air’s discography, specifically the albums 10000 Hz Legend and The Virgin Suicides. After many years of listening, my artistic impression is that Virgin Suicides is ever so slightly the better of the two. The songs on Legend vary from excellent to clunkers. Suicides is overall pretty good, with only one exceptional track. However, averaging my ratings shows that Suicides is a 3.85 while Legend rates as an even 4.

So, to reward albums that don’t veer wildly around the quality wheel, I’ve developed my own album rating formula that takes into account the consistency of all the star ratings on a given album.

The Formula

album rating = (mean of all songs + median of all songs) - standard deviation of the set

The mean sums up the whole of the album. The median shows the state of the album at its core. The standard deviation (the sample standard deviation, as computed by Excel’s STDEV function) indicates how much the individual ratings vary. The result is a number on a scale of 1 to 10. (Alternatively, divide that number by 2 to return the result to a 5-star scale.)
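For anyone who would rather script this than use a spreadsheet, here is a minimal Python sketch of the formula. The names `album_score` and `five_star` are hypothetical, and the sketch assumes each song carries a whole-star rating from 1 to 5. It uses `statistics.stdev`, which, like Excel’s STDEV, computes the sample standard deviation.

```python
from statistics import mean, median, stdev


def album_score(ratings):
    """Album score out of 10: (mean + median) - sample standard deviation.

    `ratings` holds one star rating (1-5) per song. statistics.stdev is the
    sample standard deviation, the same quantity Excel's STDEV reports.
    """
    return (mean(ratings) + median(ratings)) - stdev(ratings)


def five_star(score):
    """Convert a score out of 10 back to the 5-star scale."""
    return score / 2


# A hypothetical 12-song album rated 4 stars throughout: no spread, no penalty.
steady = [4] * 12
print(album_score(steady), five_star(album_score(steady)))  # 8.0 4.0
```

Because the sample standard deviation is zero when every rating is identical, a perfectly uniform album simply scores mean plus median.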

Let’s take a look at the formula in action. Suppose we have two albums with twelve songs each. The first is generally excellent, but varies in quality. The second is good stuff throughout.

|  | Ex. 1 | Ex. 2 |
|---|---|---|
|  | 5 | 4 |
|  | 4 | 4 |
|  | 5 | 4 |
|  | 2 | 4 |
|  | 4 | 4 |
|  | 5 | 4 |
|  | 5 | 4 |
|  | 2 | 4 |
|  | 5 | 4 |
|  | 3 | 4 |
|  | 5 | 4 |
|  | 3 | 4 |
| Mean | 4 | 4 |
| Median | 4.5 | 4 |
| Total | 8.5 | 8 |
| STDEV | 1.21 | 0 |
| Score | 7.29 | 8 |

This table shows the individual star ratings for the two theoretical albums, as well as all the statistical data, as calculated by Excel. As you can see, both albums’ average score is the same (4), and Ex. 1 even has a higher median than Ex. 2. But because the quality of Ex. 1’s songs varies a great deal, its standard deviation is substantial, so much so that its album rating drops to 7.29 (or 3.645 on a 5-star scale) when my formula is applied. Ex. 2 suffers no penalty, and its score remains 8 (4). In effect, the standard deviation rewards Ex. 2 for being of uniform quality.
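The table’s figures can be double-checked in a few lines of Python. This sketch simply re-runs the formula on the two hypothetical albums above with the standard `statistics` module; the `albums` and `results` dictionaries are just names chosen for the example.

```python
from statistics import mean, median, stdev

# Star ratings for the two hypothetical 12-song albums in the table above.
albums = {
    "Ex. 1": [5, 4, 5, 2, 4, 5, 5, 2, 5, 3, 5, 3],
    "Ex. 2": [4] * 12,
}

results = {}
for name, ratings in albums.items():
    # stdev is the sample standard deviation, matching Excel's STDEV.
    m, med, sd = mean(ratings), median(ratings), stdev(ratings)
    results[name] = (m + med) - sd
    print(f"{name}: mean {m:.2f}, median {med:.2f}, stdev {sd:.2f}, score {results[name]:.2f}")
```

Both albums share a mean of 4; the spread alone separates the 7.29 from the 8.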

Let’s take a real-world example: the two Air albums I mentioned above.

|  | 10000 Hz Legend | The Virgin Suicides |
|---|---|---|
|  | 4 | 4 |
|  | 5 | 4 |
|  | 4 | 4 |
|  | 5 | 3 |
|  | 5 | 3 |
|  | 4 | 4 |
|  | 3 | 5 |
|  | 4 | 4 |
|  | 3 | 4 |
|  | 3 | 4 |
|  | 4 | 4 |
|  |  | 4 |
|  |  | 3 |
| Mean | 4 | 3.85 |
| Median | 4 | 4 |
| Total | 8 | 7.85 |
| STDEV | 0.77 | 0.55 |
| Score | 7.23 | 7.29 |

When the formula is applied to my ratings for each, the scores for 10000 Hz Legend and The Virgin Suicides become 7.23 (3.62) and 7.29 (3.65), respectively. So factoring in the standard deviation produces scores that more closely reflect my opinion of those two albums.
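Here is the same check for the two Air albums, using my ratings from the table above. The `album_score` helper is a hypothetical name; it is a sketch of the formula, not the contents of the Excel file.

```python
from statistics import mean, median, stdev

# My star ratings for each album, in the order listed in the table above.
legend = [4, 5, 4, 5, 5, 4, 3, 4, 3, 3, 4]          # 10000 Hz Legend, 11 songs
suicides = [4, 4, 4, 3, 3, 4, 5, 4, 4, 4, 4, 4, 3]  # The Virgin Suicides, 13 songs


def album_score(ratings):
    """(mean + median) - sample standard deviation, on a 10-point scale."""
    return (mean(ratings) + median(ratings)) - stdev(ratings)


for title, ratings in (("10000 Hz Legend", legend), ("The Virgin Suicides", suicides)):
    print(f"{title}: average {mean(ratings):.2f}, score {album_score(ratings):.2f}")
```

It prints an average of 4.00 and a score of 7.23 for Legend, and 3.85 and 7.29 for Suicides: the plain average favors Legend, while the formula flips the order.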

So what does this mean? I’m not sure exactly. In practice, I could whip up some listy goodness and see which albums are truly my favorites. A comprehensive analysis would be cool; I’d love to see the distribution of my album ratings. However, that would require more programming skills than I have, though it could be a good project to help me learn.
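As a possible starting point for that project, here is a rough Python sketch that reads an exported iTunes Library XML file with the standard `plistlib` module and ranks every fully rated album. The key names (`Tracks`, `Album`, `Artist`, `Album Artist`, `Rating`, `Rating Computed`) and the 0 to 100 rating scale (20 points per star) are assumptions about the XML layout, so check them against your own export; `load_library` and `rank_albums` are hypothetical names.

```python
import plistlib
from collections import defaultdict
from statistics import mean, median, stdev


def load_library(path):
    """Parse an exported iTunes Library XML file (the path is up to you)."""
    with open(path, "rb") as fp:
        return plistlib.load(fp)


def rank_albums(library):
    """Return [((artist, album), score_out_of_5), ...], best first.

    Assumed layout: library["Tracks"] maps track IDs to dicts with "Album",
    "Artist", optionally "Album Artist", and an optional "Rating" from 0 to 100
    (20 per star). "Rating Computed" is assumed to flag ratings inferred from
    an album rating rather than set song by song.
    """
    albums = defaultdict(list)
    for track in library.get("Tracks", {}).values():
        if "Album" not in track:
            continue
        artist = track.get("Album Artist", track.get("Artist", ""))
        rating = track.get("Rating")
        if not rating or track.get("Rating Computed"):
            rating = None  # treat missing or inferred ratings as unrated
        albums[(artist, track["Album"])].append(None if rating is None else rating / 20)

    ranked = []
    for key, stars in albums.items():
        # Every song must be rated, and the sample stdev needs at least two songs.
        if None in stars or len(stars) < 2:
            continue
        score = (mean(stars) + median(stars)) - stdev(stars)
        ranked.append((key, score / 2))  # back to a 5-star scale
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked
```

With that in place, something like `rank_albums(load_library(path))[:10]` would produce a top-ten list, where `path` points at your exported XML file. Grouping by album artist keeps compilations together, but albums that share a title and artist would be merged.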

Out of curiosity, though, I have picked 11 albums, just to see how they rate. One provision, of course, is that every song on an album must have a rating before the album score can be calculated. These ratings are on a 5-star scale.

| Album | Average | My Score |
|---|---|---|
| Radiohead – OK Computer | 4.5 | 4.41 |
| Air [French band] – Moon Safari | 4.5 | 4.39 |
| Nirvana – Nevermind | 4.5 | 4.24 |
| Mouse on Mars – Radical Connector | 4.33 | 4.23 |
| Ratatat – Ratatat | 4.45 | 3.97 |
| Nine Inch Nails – With Teeth | 4.31 | 3.77 |
| The Strokes – Is This It | 4.09 | 3.7 |
| LCD Soundsystem – LCD Soundsystem | 4 | 3.68 |
| Basement Jaxx – Remedy | 3.73 | 3.51 |
| Prefuse 73 – One Word Extinguisher | 3.82 | 3.47 |
| Weezer – Make Believe | 3.58 | 3.21 |

This is by no means a top 10 list, but it is interesting to see where things ended up. It’s also interesting to see how minor fluctuations in star ratings can change the final score. For instance, if that Ratatat album had one more 5-star song in place of a 4-star song, its median would become 5 and its album score would jump to 4.51. Lower a 5-star song to 4 stars and the score drops only slightly, to 3.93. I don’t know if this is a flaw in the formula or a reward for albums that have a lot of good songs.
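The individual Ratatat ratings aren’t listed here, so the sketch below assumes, hypothetically, an 11-song album with five 5-star songs and six 4-star songs. That mix averages 4.45 and scores 3.97, matching the table, and swapping a single song in either direction reproduces the 4.51 and 3.93 figures. `five_star_score` is a hypothetical helper name.

```python
from statistics import mean, median, stdev


def five_star_score(ratings):
    """(mean + median - sample stdev) / 2, the album score on a 5-star scale."""
    return (mean(ratings) + median(ratings) - stdev(ratings)) / 2


# Hypothetical rating mix for an 11-song album: five 5s and six 4s.
base = [5] * 5 + [4] * 6
one_more_five = [5] * 6 + [4] * 5  # one 4-star song bumped up to 5 stars
one_less_five = [5] * 4 + [4] * 7  # one 5-star song dropped to 4 stars

for label, ratings in (("as is", base), ("one more 5", one_more_five), ("one less 5", one_less_five)):
    print(f"{label}: average {mean(ratings):.2f}, median {median(ratings)}, "
          f"score {five_star_score(ratings):.2f}")
```

The asymmetry comes from the median: with 11 songs, the sixth-best rating is the median, so one extra 5 flips it from 4 to 5 and adds a full point before halving, while one fewer 5 leaves it at 4.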

Problems and issues

Small data sets. These are troublesome in all statistical circumstances, and this formula is no different. An album with only one song has a mean and a median (both equal to that song’s rating), but no sample standard deviation, because the calculation divides by the number of songs minus one, which is zero. Excel’s STDEV function returns a #DIV/0! error in that case, and the formula breaks. Also, because the formula uses the average rating as a component, albums with a low number of songs will tend to skew one way or the other.
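Python’s `statistics.stdev` behaves much like Excel here: it raises `StatisticsError` when given fewer than two values. A minimal guarded sketch, which also enforces the every-song-rated rule by treating `None` as an unrated song (an assumption of this example), could look like this; `album_score_or_none` is a hypothetical name.

```python
from statistics import StatisticsError, mean, median, stdev


def album_score_or_none(ratings):
    """Return (mean + median) - sample stdev, or None if the album can't be scored.

    Albums are skipped when any song is unrated (None) or when there are fewer
    than two songs: one rating has a mean and median, but the sample standard
    deviation divides by n - 1 = 0, so statistics.stdev raises StatisticsError.
    """
    if not ratings or any(r is None for r in ratings):
        return None
    try:
        return (mean(ratings) + median(ratings)) - stdev(ratings)
    except StatisticsError:
        return None


print(album_score_or_none([5]))        # None: a single song
print(album_score_or_none([5, None]))  # None: an unrated song
print(album_score_or_none([4, 4]))     # 8.0
```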

In my library, Boards of Canada’s EP In A Beautiful Place Out In The Country has four fantastic songs and ranks at 4.63, higher than anything on that list above. As a release, I’d say that’s accurate, but I’m sure it doesn’t surpass OK Computer. I would be interested to see a chart of how the album score changes as the number of tracks on an album increases.
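A rough first cut at that chart needs no real data. This sketch holds a purely hypothetical rating pattern fixed (every song 5 stars except a single 4-star song) and computes the score for albums of 2 to 12 songs. The lone weaker song costs less as the track count grows, because it pulls the mean down by less and widens the standard deviation by less.

```python
from statistics import mean, median, stdev

# Hypothetical rating pattern: every song gets 5 stars except one 4-star song.
track_counts = list(range(2, 13))
scores = []
for n in track_counts:
    ratings = [5] * (n - 1) + [4]
    scores.append((mean(ratings) + median(ratings)) - stdev(ratings))

for n, score in zip(track_counts, scores):
    print(f"{n:2d} songs: {score:.2f} / 10 ({score / 2:.2f} stars)")
```

Swapping in other patterns (a single 3-star song, two weak songs, and so on) would show how strongly short releases are favored or punished under different rating habits.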

Additionally, I haven’t figured out a way to rank partial albums, i.e. albums where I either don’t own all the songs or albums where I’ve deleted songs I didn’t like. For now, I’m just excluding them altogether.
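If the track tags are filled in, one way to flag partial albums automatically is to compare how many of an album’s songs are in the library with its `Track Count` tag. This is a sketch under assumptions: tracks are dicts shaped like iTunes Library XML entries, `partial_albums` is a hypothetical name, and because `Track Count` is usually per disc, multi-disc albums would need extra handling.

```python
from collections import Counter


def partial_albums(tracks):
    """Return (artist, album) keys with fewer songs present than "Track Count".

    `tracks` is an iterable of dicts with the assumed keys "Artist", "Album"
    and, optionally, "Track Count". Albums with no Track Count are ignored.
    """
    owned = Counter()
    expected = {}
    for track in tracks:
        key = (track.get("Artist", ""), track.get("Album", ""))
        owned[key] += 1
        if "Track Count" in track:
            expected[key] = max(expected.get(key, 0), track["Track Count"])
    return {key for key, count in expected.items() if owned[key] < count}
```

Albums returned by this check could then be left out of the ranking, which is the same exclusion I’m making by hand.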

Still, I’m fairly pleased with the results I’ve been getting as I run various albums through the formula. It’s working for me and my own song rating system, but I’m curious to see how it works with someone else’s.

Fortunately, Webomatica has posted his song-by-song ratings for The Beatles’ Sgt. Pepper’s Lonely Hearts Club Band. Using his numbers, the average for the album is 4.38, while my formula renders a 4.28. I’d say that’s a consistently good album.

::

Here’s a Microsoft Excel file you can download. Plug in your star ratings to find the album score. AlbumScore.zip